3BI: Back! Systems vs Goals, Base-Rate Fallacy, Bayesian Thinking

The newsletter is back! I took a break for most of the summer due to a lot of other things going on, like…

Getting married!

And honeymooning in Greece!

But I’m back and excited to start writing more again. More on that below.

Systems vs Goals

An approach I’m taking to increase the consistency of my newsletters, and writing overall, is focusing on systems over goals.

As I got overwhelmed managing work, wedding planning, taking care of a puppy, and everything else in life last spring and summer, I stayed committed to writing and sending out my newsletter regularly. Each week, however, I would get too busy with other things, reach Friday with nothing done, and end up skipping it while telling myself “next week will be better.”

Part of the problem was simply a lack of time and energy to commit to it with everything else going on, and eventually I decided I needed to shift priorities and take a break.

Another issue, though, was too much focus on a specific goal rather than the system required to get there. Instead of implementing consistent reading and writing habits that led to newsletters, I was fixated on the end product of “finished newsletter sent out on Friday.” With my mind anchored to that deadline, I found it too easy to skip working on it earlier in the week. When I was less busy, this wasn’t an issue, but it became a major barrier when there were many other priorities competing for my time and energy.

This is a classic example of “systems vs goals.” A goal is a clearly defined outcome we want to achieve, while a system is a clearly defined process. It’s easy to set a goal, and that’s often the first step we take to achieve something. It’s harder to define a system to get there, though, and even harder to execute it consistently.

The system matters much more than the goal, however. You can set a goal but are unlikely to achieve it without a system to get there. Conversely, you can define and execute a system and end up with a desirable outcome even if it was never explicitly defined as a goal. Per author James Clear:

…if you completely ignored your goals and focused only on your system, would you still succeed? For example, if you were a basketball coach and you ignored your goal to win a championship and focused only on what your team does at practice each day, would you still get results?

I think you would.

The goal in any sport is to finish with the best score, but it would be ridiculous to spend the whole game staring at the scoreboard. The only way to actually win is to get better each day. In the words of three-time Super Bowl winner Bill Walsh, “The score takes care of itself.” The same is true for other areas of life. If you want better results, then forget about setting goals. Focus on your system instead.

Systems also have a psychological benefit in that they break down a daunting outcome into smaller and more achievable steps. For example, behavioral economics researchers studied the effect of breaking savings goals into smaller chunks with the robo-saving app Acorns:

On the enrollment screen, users were randomly assigned to one of three categories. Some were asked if they would like to save $5 every day, some were asked if they wanted to save $35 a week, and some were asked if they wanted to save $150 a month. While only 7% opted to save $150 a month, nearly 30% decided to save $5 a day. That’s a huge shift in choices, especially since the amounts are all essentially equivalent. Saving $5 a day makes us think about skipping a Starbucks latte (that seems doable), while $150 a month makes us think about car payments, which is a more daunting amount to give up.
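To check the claim that the three framings are “essentially equivalent,” here is a quick Python sketch that converts each one to an average monthly amount. It assumes a 365-day year and 52 weeks per year; the function names are my own, not anything from the study.

```python
# Convert each savings framing from the Acorns example into an average
# monthly amount. Assumes a 365-day year and 52 weeks per year
# (an illustrative convention, not the study's own method).
DAYS_PER_YEAR = 365
WEEKS_PER_YEAR = 52
MONTHS_PER_YEAR = 12


def daily_to_monthly(amount_per_day: float) -> float:
    """Average monthly total of a fixed daily contribution."""
    return amount_per_day * DAYS_PER_YEAR / MONTHS_PER_YEAR


def weekly_to_monthly(amount_per_week: float) -> float:
    """Average monthly total of a fixed weekly contribution."""
    return amount_per_week * WEEKS_PER_YEAR / MONTHS_PER_YEAR


if __name__ == "__main__":
    print(f"$5/day     -> ${daily_to_monthly(5):.2f}/month")
    print(f"$35/week   -> ${weekly_to_monthly(35):.2f}/month")
    print("$150/month -> $150.00/month")
```

All three land within a few dollars of $150 a month, which is what makes the gap in sign-up rates so striking.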

So, now I’m shifting that focus back to systems. My priority is building a habit of reading and writing every day, even if it’s just a small amount. When enough of that writing accumulates for a newsletter, I’ll send it out. While I still have a goal of a new newsletter each week, I’m prioritizing execution of the system to get there instead.

Newsletters may still be a little inconsistent in the short term, but as I get back into a good system, they’ll become more frequent.

The Base-Rate Fallacy

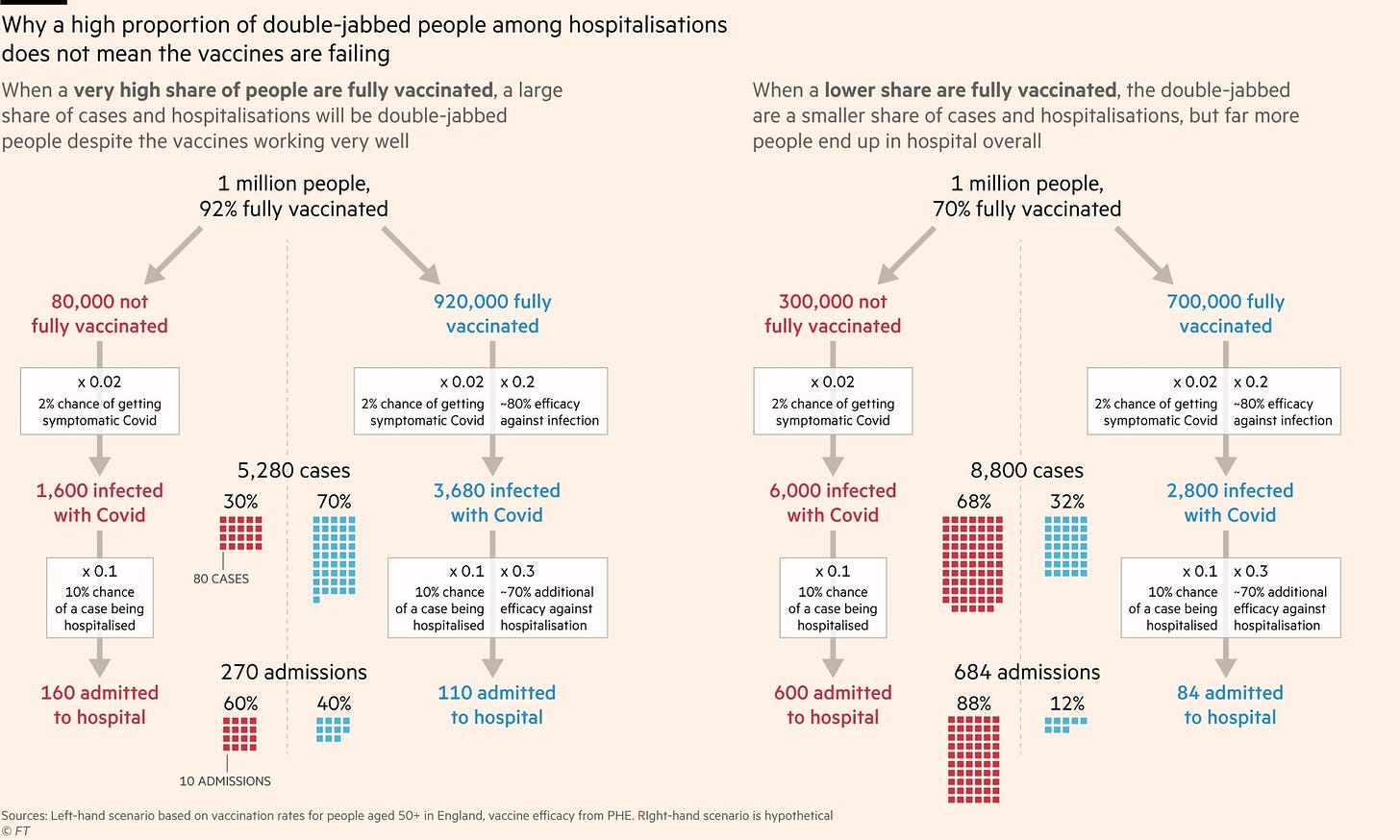

You may have seen some alarming headlines over the summer about a higher-than-expected number of fully vaccinated people being infected or hospitalized with COVID-19. That can feel confusing: if the vaccines are as good as the data from research studies suggest, shouldn’t the share of hospitalized patients who are vaccinated be much lower?

Much of the confusion and alarm stems from the base-rate fallacy (also known as base rate neglect), where we misjudge the likelihood of an event by overvaluing specific individual data points while ignoring or minimizing its baseline probability. Put more simply, it’s when we evaluate the likelihood or frequency of something by using the wrong denominator or base population.

Let’s look at an example known as the prosecutor’s fallacy:

Rather than ask the probability that the defendant is innocent given all the evidence, the prosecution, judge, and jury make the mistake of asking what the probability is that the evidence would occur if the defendant were innocent (a much smaller number).

A famous example is the trial of Sally Clark in the UK. In 1996, Clark’s infant son died a few weeks after birth. A year later, the same tragedy occurred with her second child, and Clark was accused of double murder.

Her defense lawyers argued that both children died of SIDS, or sudden infant death syndrome. The prosecution countered that with statistics:

An expert hired by the prosecution, paediatrician Roy Meadow, estimated that the SIDS rate for a family like Clark’s was around 1/8,500. The probability of Clark’s innocence was, therefore, according to Meadow, the square of that number, or around 1/70,000,000. That number was apparently enough evidence for the jury, who found Clark guilty.

The reasoning behind that figure was flawed and is an example of the prosecutor’s fallacy:

Let’s say we believe the assumption that the true probability of 2 SIDS deaths in a family like Clark’s is 1/70,000,000. However, this number is not, as the prosecutors argued, the probability of Clark’s innocence, given the two deaths. Instead, it is the probability of the evidence (the two deaths), given Clark’s innocence, and this is a crucial distinction.

If we want to compute the relevant statistic, the probability of Clark’s innocence given the two deaths, here is one way to do it: take a base population of all families with two children who both died. For what fraction of that base population did the two children die of natural causes? That number turns out to be around 2/3. In other words, the probability that Clark is a murderer is only 33%, which tells a completely different story than the statistic quoted by the prosecution.

This is the base-rate fallacy: the wrong base population was used to calculate the conditional probability. If you’re ever accused of a crime you didn’t commit, make sure a good statistician is part of your defense. (Thankfully, Clark was released three years later, though unfortunately not because of the egregious misapplication of statistics, but because it was discovered that the prosecution had withheld evidence.)
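Here is a minimal Python sketch of the distinction between P(evidence | innocent) and P(innocent | evidence), under loudly stated assumptions. Only the 1/8,500 SIDS rate comes from the text. The double-murder rate is a hypothetical value I picked so that the result matches the roughly 2/3 figure quoted above, and the model treats double SIDS and double murder as the only two explanations for two infant deaths. The function names are illustrative.

```python
# HYPOTHETICAL illustration: only the 1/8,500 SIDS rate comes from the text.
# The double-murder rate is an assumed value, chosen to reproduce the ~2/3 figure.


def prob_two_sids(single_rate: float) -> float:
    """Meadow's calculation: square the single-death rate (assumes independence)."""
    return single_rate ** 2


def prob_innocent_given_deaths(p_two_sids: float, p_two_murders: float) -> float:
    """P(innocent | two deaths) via Bayes' theorem, assuming double SIDS and
    double murder are the only two explanations for two infant deaths."""
    return p_two_sids / (p_two_sids + p_two_murders)


if __name__ == "__main__":
    # If the mother is innocent, two deaths can only mean two SIDS deaths.
    p_evidence_if_innocent = prob_two_sids(1 / 8_500)
    print(f"P(two deaths | innocent): about 1 in {1 / p_evidence_if_innocent:,.0f}")

    # Assumed: double infant murder is half as common as double SIDS.
    p_two_murders = p_evidence_if_innocent / 2
    posterior = prob_innocent_given_deaths(p_evidence_if_innocent, p_two_murders)
    print(f"P(innocent | two deaths): {posterior:.2f}")
```

The exact output matters less than the structure: a tiny P(evidence | innocent) tells you little on its own, because the competing explanation is also extremely rare, and Bayes’ theorem weighs the two against each other.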

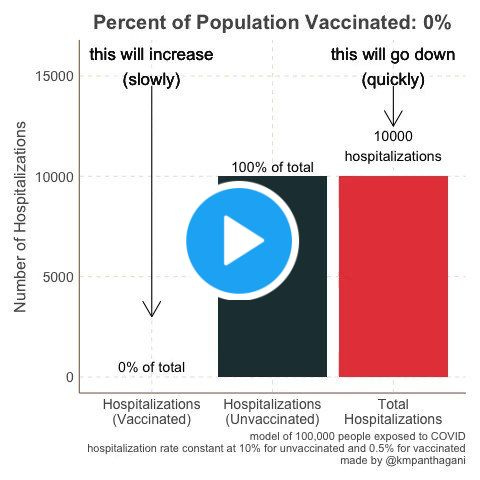

How does this apply to vaccines? As the base rate of vaccinated people grows, it is inevitable that a higher rate of infections, hospitalizations, and deaths occur among them! In a theoretical population where 100% of people are vaccinated, than every infection would occur in a vaccinated person. See this graphic from the Financial Times:

Or, see this video below for a visual demonstration:

It’s not intuitive, but a high proportion of infections, hospitalizations, or deaths in the vaccinated is not a sign that vaccines aren’t working, but more often a downstream effect of more people getting their shots.
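A small Python sketch makes the base-rate effect concrete. It assumes, purely for illustration, that vaccination cuts the risk of hospitalization by 90% and that everyone otherwise faces the same baseline risk. Real-world efficacy and exposure vary, so treat the numbers as a toy model rather than data.

```python
# Toy model: what fraction of hospitalized patients are vaccinated?
# HYPOTHETICAL assumption: vaccination cuts hospitalization risk by 90%,
# and everyone otherwise faces the same baseline risk.


def vaccinated_share(coverage: float, risk_reduction: float) -> float:
    """Fraction of hospitalizations that occur in vaccinated people.

    coverage: fraction of the population vaccinated (0 to 1)
    risk_reduction: relative reduction in risk for the vaccinated (0 to 1)
    """
    vaccinated = coverage * (1 - risk_reduction)  # relative hospitalizations, vaccinated
    unvaccinated = 1 - coverage                   # relative hospitalizations, unvaccinated
    total = vaccinated + unvaccinated
    return vaccinated / total if total > 0 else 0.0


if __name__ == "__main__":
    for coverage in (0.2, 0.5, 0.8, 0.9, 0.95, 1.0):
        share = vaccinated_share(coverage, risk_reduction=0.9)
        print(f"{coverage:.0%} vaccinated -> {share:.0%} of hospitalized are vaccinated")
```

Under these assumptions, at 90% coverage nearly half of hospitalized patients are vaccinated, even though each vaccinated person’s risk is one tenth of an unvaccinated person’s.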

When Bayesian Thinking Goes Wrong

I really like this quote by Prof Francois Balloux, director of the University College London Genetics Institute, and its implications for decision-making:

Our brains work in a Bayesian way – we have priors that influence how we regard new information. As a scientist, it is very important not to have overwhelmingly strong priors – you need to be open to surprise and to let your priors be updated by new data. It’s important to engage with new evidence. Being dogmatic is problematic.

“Bayesian” refers to a method of reasoning based on Bayes’ Theorem: we start with a belief informed by relevant prior information, then update that belief as new information arrives. Here’s an example:

Let’s imagine that you and a friend have spent the afternoon playing your favorite board game, and now, at the end of the game, you are chatting about this and that. Something your friend says leads you to make a friendly wager: that with one roll of the die from the game, you will get a 6. Straight odds are one in six, a 16 percent probability. But then suppose your friend rolls the die, quickly covers it with her hand, and takes a peek. “I can tell you this much,” she says; “it’s an even number.” Now you have new information and your odds change dramatically to one in three, a 33 percent probability. While you are considering whether to change your bet, your friend teasingly adds: “And it’s not a 4.” With this additional bit of information, your odds have changed again, to one in two, a 50 percent probability. With this very simple example, you have performed a Bayesian analysis. Each new piece of information affected the original probability, and that is Bayesian [updating].
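The dice example can be reproduced by brute-force enumeration in Python. Because every face starts out equally likely, Bayesian updating here reduces to crossing off the outcomes each clue rules out and renormalizing over what remains. This is a sketch for the fair-die case only; the function name is my own.

```python
from fractions import Fraction

# Bayesian updating on a fair die by enumeration: with a uniform prior,
# each clue simply removes the outcomes it rules out.


def prob_of_six(outcomes: list[int]) -> Fraction:
    """Probability of a 6 when all remaining outcomes are equally likely."""
    return Fraction(outcomes.count(6), len(outcomes))


faces = [1, 2, 3, 4, 5, 6]
even = [f for f in faces if f % 2 == 0]      # "it's an even number"
even_not_four = [f for f in even if f != 4]  # "and it's not a 4"

print("Before any clues:", prob_of_six(faces))          # 1/6
print("Given even:", prob_of_six(even))                  # 1/3
print("Given even, not 4:", prob_of_six(even_not_four))  # 1/2
```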

Our minds naturally work in a casually Bayesian way. We constantly encounter new information and add it to our existing knowledge to enhance our thinking and decision-making, meaning our opinions don’t exist in a vacuum.

The problem is that our prior information isn’t always accurate. Formal Bayesian analysis means calculating conditional probabilities from real evidence (including the proper base rates!), but our minds lack the capacity to do that for every decision or opinion. Instead, we make informal calculations and become stubbornly attached to previous decisions. Our priors grow too strong, and our Bayesian reasoning turns into confirmation bias.

Going back to Balloux’s point, it’s important not to hold our prior knowledge too tightly and to stay receptive to evidence that contradicts it. There’s more information in the world than we could possibly know at one time, and the odds that we’re wrong about something are high. Being open to new evidence means weighing it fairly, even when it conflicts with our current beliefs.
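One way to see why overly strong priors are a problem is the odds form of Bayes’ theorem: posterior odds equal prior odds times the likelihood ratio of the evidence. The Python sketch below applies the same hypothetical piece of evidence (ten times more likely if the belief is false) to beliefs held with increasing confidence. The likelihood ratio and the function name are illustrative assumptions.

```python
# Odds form of Bayes' theorem: posterior odds = prior odds * likelihood ratio.
# HYPOTHETICAL evidence: a likelihood ratio of 0.1, i.e. the evidence is ten
# times more likely if the belief is false than if it is true.


def update(prior: float, likelihood_ratio: float) -> float:
    """Return P(belief true | evidence) given P(belief true) and
    likelihood_ratio = P(evidence | true) / P(evidence | false)."""
    if prior >= 1.0:
        # Certainty: the prior odds are infinite, so any evidence with a
        # positive likelihood ratio leaves the belief at 1.
        return 1.0
    prior_odds = prior / (1 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1 + posterior_odds)


if __name__ == "__main__":
    for prior in (0.5, 0.9, 0.99, 0.999, 1.0):
        print(f"prior {prior:.3f} -> posterior {update(prior, 0.1):.3f}")
```

With a 50% prior the belief drops to about 9%, but with a 99% prior it only falls to about 91%. A prior of exactly 1, pure dogmatism, cannot be moved by any evidence at all.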

For more on Bayesian thinking and its rival, frequentist inference, check out the video below:

Have a great weekend!